The 2026 Ponemon Cost of Insider Risks research captures a moment of transition for insider risk management. Organizations are investing more, containment is getting faster, and dedicated programs are showing measurable returns. At the same time, the environment those programs operate in has changed, especially as generative AI and AI agents become part of everyday work.

On the cost side, the average annual cost of insider risk incidents has reached US$19.5M, up from US$17.4M in 2024.

The standout driver is negligence — the familiar scenarios security leaders see every day: mistakes, process gaps, and unmanaged workflows that become expensive at scale. What’s changed is the velocity: widespread unauthorized AI use, often without visibility or governance.

The opportunity in 2026 is not to “start over.” It’s to double down on what already works, extending insider risk management to AI risk management with behavioral oversight, classification, and governance.

Key findings

- The cost of insider risk continues to climb

- AI use is outpacing governance

- Shadow AI is amplifying negligence-related exposure

- Insider risk programs are delivering clear ROI

- Lack of collaboration and poor ROI translation hinder justification for insider risk funding

- Faster containment is reducing overall losses

- Behavioral intelligence and AI are driving faster insider risk detection and mitigation

How AI is changing the insider risk management landscape

AI is already reshaping how information moves inside the enterprise. The issue isn’t whether it’s happening — it’s whether we’re accounting for it.

Ninety-two percent of organizations say generative AI has changed how employees access and share data. Nearly three-quarters worry unauthorized AI use is creating exposure they can’t see.

But oversight lags reality.

Only 18% have fully integrated AI governance into insider risk programs. And with just 13% formally adopting AI at the enterprise level, most usage is occurring outside defined guardrails.

The takeaway: AI has become part of the workforce, and updating governance and classification to reflect that reality strengthens both trust and control. When organizations account for AI‑driven behaviors within their existing frameworks, they gain the ability to track data movement more accurately, support safer experimentation, and guide adoption with intentionality. It’s a shift that transforms AI from a visibility challenge into a governed capability.

Insider threat intelligence: negligence and shadow AI

For the 2026 report, Ponemon asked DTEX i³ to examine whether rising employee negligence is tied to AI use. Their investigations reveal a consistent dynamic: employees aren’t acting with intent to harm — they’re moving fast, relying on tools and workflows that sit well outside approved boundaries. And in that gap, repeatable leakage paths have formed.

The report dives into these exposure patterns to understand where visibility breaks down, and how the risk profile shifts as agents enter workflows.

The takeaway: AI risk management can no longer be separated from insider risk management. The same pathways driving productivity now represent insider exposure points. Security leaders need visibility into these AI‑driven behaviors, because when those signals disappear, containment slows and losses grow.

The ROI of insider risk management

While costs are rising, the report also offers concrete evidence that insider risk management can deliver ROI at scale.

Eighty-two percent of organizations have or plan to have an insider risk management program.

Among those already running an insider risk program (63%), respondents report it helped them avoid an average of seven insider security incidents over the past 12 months — equating to roughly US$8.2M in avoided breach-related costs.

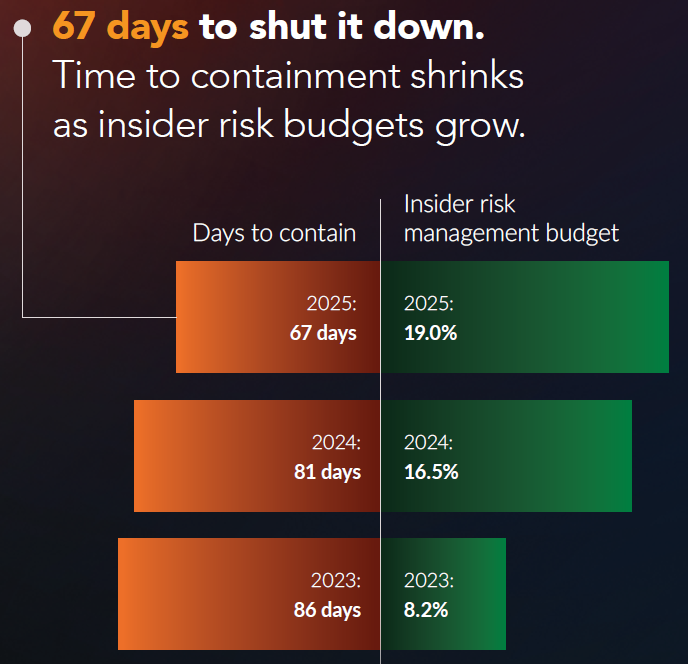

In addition, insider risk spend has risen to 19% of IT security budgets, a signal that many organizations now treat this as a core discipline rather than an adjunct function. As budgets increased, containment times dropped significantly.

The faster the containment, the lower the cost: incidents contained within 30 days cost US$14.2M annually, compared with US$21.9M when containment exceeds 90 days.
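To make the gap between those two containment figures concrete, the arithmetic can be sketched in a few lines of Python. The only inputs are the two annualized costs quoted above; the function and variable names are illustrative and do not come from the report.

```python
# Annualized costs quoted in this post, in US$ millions.
COST_CONTAINED_WITHIN_30_DAYS = 14.2
COST_CONTAINED_OVER_90_DAYS = 21.9


def containment_gap(fast_cost: float, slow_cost: float) -> tuple[float, float]:
    """Return (absolute difference, difference as a percentage of the slow cost)."""
    difference = slow_cost - fast_cost
    return difference, difference / slow_cost * 100


difference, percent = containment_gap(
    COST_CONTAINED_WITHIN_30_DAYS, COST_CONTAINED_OVER_90_DAYS
)
print(f"Difference: US${difference:.1f}M ({percent:.0f}% lower)")
# prints "Difference: US$7.7M (35% lower)"
```

In other words, the fast-containment figure is about US$7.7M, or roughly 35%, below the slow-containment figure.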

The takeaway: insider risk programs are proving their value in hard numbers. With nearly a fifth of security budgets now dedicated to this discipline, the results show that focused investment — particularly in behavioral analytics and AI-enabled detection — translates into faster response and materially lower exposure.

Justifying budget for insider risk management

Findings in the report show that budget growth is happening, but not evenly. This matters because insider risk management only works when ownership is truly shared across functions. Many organizations have increased investment to secure scarce talent and strengthen prevention and detection, yet a meaningful portion still report funding gaps that limit program maturity.

The report also highlights a consistent friction point: budget becomes harder to secure when collaboration across security, HR, legal, and compliance is weak, or when ROI isn’t framed in terms leaders recognize and trust.

The takeaway: budget conversations get significantly easier when insider risk programs are anchored in shared workflows, shared visibility, and shared metrics. Alignment, not technology alone, is what turns insider risk management from a cost request into a strategic investment.

The technologies that enable effective AI and insider risk management

Across the findings, one theme stands out: the capabilities driving the strongest outcomes are those that cut noise, sharpen decisions, and speed containment.

AI‑enabled security tools are moving from experimentation into real operational use. More than 40% of organizations now use AI to detect or prevent insider risks, and early adopters report meaningful gains, including fewer false positives and faster containment.

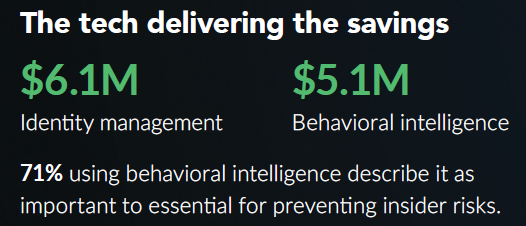

Identity management and behavioral analytics are also becoming foundational, delivering the biggest impact in reducing insider risk costs.

The takeaway: capabilities that combine strong governance with high‑fidelity behavioral and AI‑driven insights are now the clearest path to faster containment and better outcomes. The organizations seeing the biggest returns are those that pair access controls with contextual understanding — not just detecting activity but interpreting it.

What to prioritize in 2026

Based on the 2026 findings, the priorities are straightforward:

- Make AI part of governance, not an exception. Extend classification and policy to prompts, outputs, meeting summaries, and agent actions; define approved AI use cases and red lines so AI‑driven work inherits existing controls.

- Close the AI visibility gap. Ensure you can answer what moved, where, and why across sanctioned and unsanctioned AI use by instrumenting traceability for data flows, prompts/uploads, and resulting artifacts.

- Treat AI agents as insider risks. Apply privileged‑user standards to agents: scoped access, auditable logging, change control, named owners, and pre‑deployment testing for over‑permissioning and data leakage.

- Prioritize behavioral context over alert volume. Deploy behavioral analytics on high‑value workflows to reveal intent and sequence, reducing false positives and accelerating containment.

- Prove ROI with outcome metrics. Report incidents avoided, time to contain by severity band, and downstream loss prevented. Tie budget asks to these outcomes so investment decisions survive scrutiny.
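As one way to picture the outcome metrics in the last priority, here is a minimal Python sketch that rolls incident records up into incidents avoided, median time to contain by severity band, and loss prevented. Everything in it is an assumption made for illustration: the `Incident` fields, the severity band names, and the sample values are hypothetical and are not taken from the report or from any DTEX product.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import median


@dataclass
class Incident:
    severity: str              # hypothetical bands: "low", "medium", "high"
    days_to_contain: int       # 0 for incidents stopped before any impact
    avoided: bool              # True if the incident was prevented outright
    loss_prevented_usd: float  # internal estimate, not a report figure


def summarize(incidents: list[Incident]) -> dict:
    """Aggregate the three outcome metrics from a list of incident records."""
    days_by_band = defaultdict(list)
    for incident in incidents:
        # Only incidents that actually occurred have a containment time.
        if not incident.avoided:
            days_by_band[incident.severity].append(incident.days_to_contain)
    return {
        "incidents_avoided": sum(1 for i in incidents if i.avoided),
        "median_days_to_contain": {
            band: median(days) for band, days in days_by_band.items()
        },
        "loss_prevented_usd": sum(i.loss_prevented_usd for i in incidents),
    }


# Made-up sample data, for illustration only.
sample = [
    Incident("high", 40, False, 250_000.0),
    Incident("high", 10, False, 400_000.0),
    Incident("low", 5, False, 20_000.0),
    Incident("medium", 0, True, 1_000_000.0),
]
report = summarize(sample)
print(report)
```

Reporting the median per severity band, rather than a single overall average, keeps one long-running high-severity case from hiding improvements elsewhere; a team could just as reasonably choose a mean or a percentile.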

Conclusion

In 2026, insider risk won’t be defined by whether AI exists in your environment — it already does. The differentiator will be whether your program can make AI activity observable, attributable, and governable at the same standard you expect for human insiders. If visibility is the battleground, then telemetry, classification, and cross-functional operating rhythm are the advantage.

Download the full 2026 Ponemon Cost of Insider Risks Global Report to quantify ROI, benchmark containment, and map your 2026 priorities.

FAQ: what the report tells us

Insider risk management delivers measurable ROI by preventing costly insider threats and reducing containment time. In the 2026 Ponemon research, organizations with an insider risk management program said they avoided an average of seven insider incidents in 12 months, equal to about US$8.2 million in avoided breach-related costs. The strongest returns come from faster containment and better visibility, particularly across shadow AI and human risk.

Organizations should measure insider risk management using business and security outcomes. The most useful metrics are incidents avoided, time to contain, financial loss prevented, and reductions in annual insider threat costs. For AI risk management and AI security, teams should also track shadow AI activity, policy violations, and risky data movement. Faster containment and clearer cross-functional metrics show whether the program is working.

Shadow AI is the use of AI tools outside approved governance, security, and compliance controls. It increases insider risk because employees may enter sensitive data into systems the organization cannot fully monitor, classify, or govern. That creates visibility gaps, weakens AI security, and raises the chance of data leakage. In practice, shadow AI often turns everyday negligence into higher-cost insider threats.

The technologies that reduce insider risk costs most are identity management, behavioral analytics, and AI-enabled security tools. These capabilities strengthen insider risk management by improving visibility, reducing false positives, and speeding containment. For AI risk management, they also help organizations detect shadow AI activity, monitor risky behavior, and interpret context more accurately. Better context leads to faster response and lower insider threat costs.
