Executive summary (TL;DR)

- This advisory examines how AI note-taking tools can enable covert data exfiltration through legitimate services.

- This matters now because AI assistants are spreading faster than enterprise controls and visibility.

- Risk is highest for organizations handling sensitive data, especially in remote and hybrid environments.

- In this investigation, a remote finance employee used a personal account to move sensitive data into a cloud-based AI tool.

- Primary risks include hidden data loss, weak attribution, unsecured sharing, and added exposure through wearables and personal webmail.

- Organizations should prioritize stronger AI governance and monitoring for sensitive data movement.

Threat overview

The integration of artificial intelligence is reshaping how organizations work. It can improve efficiency, automate routine tasks, and help employees process information faster. It can also introduce new exposure when sensitive data moves into services the organization does not fully govern.

This advisory focuses on data exfiltration through AI note-taking and transcription tools. In the investigated case, a disgruntled employee used a personal account with cloud-based AI note-taking tools to aggregate and exfiltrate sensitive company data. Because the activity occurred within a legitimate service and through a personal account, detection was more difficult and intent was easier to dispute.

The scenario reflects a growing AI note-taking risk for security teams: data can be captured during meetings, summarized outside approved workflows, exposed through unsecured links, or synchronized across devices that sit outside normal monitoring. This makes the issue both a technology problem and an insider threat problem.

AI note-taking tools and their risks

AI note-taking tools, such as Fireflies.ai, Otter.ai, and similar meeting assistant platforms, offer transcription, summarization, and searchable records of voice conversations. These tools improve productivity, but they introduce significant data exfiltration risks when used outside enterprise controls.

Key risks associated with AI note-taking tools include:

- Unsecured sharing links: Summaries and transcripts can be published via links accessible to anyone, bypassing access controls.

- Personal account use: Employees using personal accounts move data outside enterprise visibility entirely.

- Cloud synchronization: Outputs are stored in third-party cloud environments the organization does not govern.

- Wearable AI devices: Companion hardware can capture meeting audio and sync to personal cloud storage without IT oversight.

- Bot participants: Some tools join meetings as automated bots, recording content without explicit per-session approval.

Why this matters now

Organizations are still working out how generative AI is being used across the business. That creates gaps in policy, monitoring, and employee awareness. AI note-taking assistants and related wearable devices can quietly expand the attack surface if security teams do not track how they are introduced, what they capture, and where the resulting data is stored.

DTEX investigation and indicators

A recent investigation found that an employee was using AI note-taking assistant tools to exfiltrate sensitive company data. The individual worked in a remote finance role at a banking and investment firm. The investigation combined endpoint indicators with in-person interviews involving colleagues and management.

The case was identified “left of boom”, before the attempted exfiltration was complete, which enabled the organization to contain most of it and begin legal action to reduce the remaining risk.

A further finding involved AI-generated audio files reportedly created to support leverage in a severance dispute. One file was disguised to resemble a Zoom recording and was presented as evidence that the employee’s manager had made discriminatory comments.

The detailed investigation walkthrough and accompanying indicators are classed as “limited distribution” and are available only to approved insider risk practitioners. Log in to the customer portal to access the indicators, or contact the i³ team to request access.

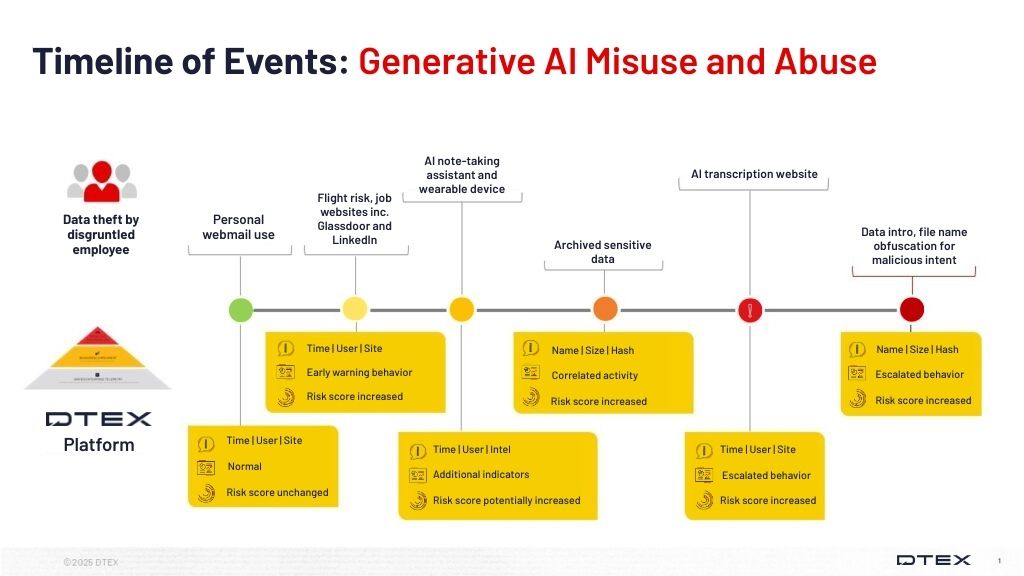

High-level investigative flow

| Stage | Description |
| --- | --- |
| Potential risk indicator, aggregation | AI transcription platform use: The user accessed an AI transcription site, fireflies.ai, that offers transcription, summarization, search, and analysis of voice conversations, including the ability to publish summaries through unsecured links. These services can also join meetings as bots when permitted. |
| Aggregation | Archived sensitive data: File origin analysis showed that the materials had been copied from a folder labeled as sensitive. Although the folder itself indicated higher sensitivity, the files lacked consistent markings or classifications. |
| Potential risk indicator | Personal webmail and personal account use: Personal email use appeared connected to access to online services. This increased the likelihood that data was moved into AI tools outside enterprise visibility and control. |
| Potential risk indicator | Low-visibility flight risk: Investigators did not find evidence of job applications submitted from the corporate device. The absence of obvious activity contributed to a lower-risk appearance while masking more deliberate behavior. |
| Circumvention, exfiltration | AI wearables: Interviews revealed use of an AI note-transcribing wearable device. The device synchronized with a phone and cloud storage, creating another path for exposing sensitive company information and PII during meetings. |
| Potential risk indicator | Data introduction: The user interacted with a file introduced through an external link. Investigators suspected the downloaded file may have been connected to a discrimination claim strategy. |
| Obfuscation | File renaming: The user renamed a file to match Zoom naming conventions, reducing the chance that the file would draw scrutiny. |
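
The obfuscation stage in the table above (renaming a file to match Zoom naming conventions) can be approximated with a simple filename check over file-rename telemetry. The sketch below is illustrative only: the `RenameEvent` record, the function names, and the filename patterns are assumptions, not indicators from the investigation, and the patterns should be checked against the recording names Zoom actually produces in your environment.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for Zoom-style recording names; verify locally before use.
ZOOM_LIKE_PATTERNS = [
    re.compile(r"^zoom_\d+\.(mp4|m4a)$", re.IGNORECASE),
    re.compile(r"^audio_only(_\d+)?\.m4a$", re.IGNORECASE),
    re.compile(r"^GMT\d{8}-\d{6}_Recording.*\.(mp4|m4a)$", re.IGNORECASE),
]


@dataclass
class RenameEvent:
    """Hypothetical shape of a file-rename record from endpoint telemetry."""
    user: str
    old_name: str
    new_name: str


def looks_like_zoom(name: str) -> bool:
    """True if the filename matches any of the assumed Zoom-style patterns."""
    return any(p.match(name) for p in ZOOM_LIKE_PATTERNS)


def suspicious_renames(events):
    """Keep renames where a non-Zoom-looking file was given a Zoom-looking name."""
    return [
        e for e in events
        if looks_like_zoom(e.new_name) and not looks_like_zoom(e.old_name)
    ]
```

A match is only a prompt for review. Legitimate recordings are renamed too, so this check is most useful when combined with file origin, such as whether the file was introduced through an external link.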

Key indicators security teams should monitor

- Access to AI note-taking, transcription, and meeting-assistant services

- Personal account use with cloud-based AI services

- Sensitive file access followed by clipboard or upload activity

- Personal webmail used alongside research or file-handling activity

- Use of wearable AI assistants in meetings

- Files introduced from external links

- File renaming patterns designed to mimic common collaboration tools

- HTTP upload activity associated with AI services
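
Several of these indicators are more meaningful in sequence than in isolation, for example sensitive file access followed shortly by an upload to an AI service. The sketch below shows one way to correlate the two per user within a time window. The `Event` record, the event kind strings, and the 30-minute default are assumptions chosen for illustration, not values from the investigation.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Event:
    """Hypothetical normalized telemetry record."""
    user: str
    kind: str            # assumed values: "sensitive_file_access", "ai_upload"
    timestamp: datetime


def access_then_upload(events, window=timedelta(minutes=30)):
    """Return (user, access_time, upload_time) for each AI upload that follows
    the same user's most recent sensitive file access within `window`."""
    hits = []
    last_access = {}  # user -> timestamp of most recent sensitive access
    for e in sorted(events, key=lambda ev: ev.timestamp):
        if e.kind == "sensitive_file_access":
            last_access[e.user] = e.timestamp
        elif e.kind == "ai_upload":
            accessed = last_access.get(e.user)
            if accessed is not None and e.timestamp - accessed <= window:
                hits.append((e.user, accessed, e.timestamp))
    return hits
```

The window length is a tuning decision: a short window reduces noise, while a patient insider may stage data well before uploading it.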

Insider threat profile

The investigated user displayed behaviors consistent with a deliberate insider threat rather than casual policy misuse.

Observed characteristics

- Remote employee in a finance role with access to sensitive information

- Technically capable and operationally cautious

- Comfortable using both corporate and non-corporate channels

- Used personal accounts to create plausible deniability

- Avoided obvious on-device behaviors that might elevate risk scoring

- Introduced external material into the environment

- Used AI-generated content in an attempt to strengthen a workplace claim

Operational significance

This profile matters because the activity blended into normal business tooling. The employee did not rely on obviously malicious software or a noisy exfiltration path. Instead, they used legitimate AI-enabled services, weakly governed workflows, and social context to reduce detection.

Insider threat persona

Disgruntled, technically capable insider using legitimate AI services

This persona is a trusted employee who understands where monitoring is strong and where it is weak. They avoid overtly suspicious behavior on the corporate device, use personal accounts and cloud-based services to move data, and rely on common collaboration patterns to make activity appear routine.

In this case, the individual also used AI-generated material as leverage in an employment dispute. That combination of data handling, deception, and personal motive increases both legal and operational risk.

This persona should not be treated as a broad stereotype. It is a behavior-based pattern drawn from the investigated activity: access to sensitive data, use of legitimate AI tools outside approved controls, concealment through normal-looking workflows, and attempts to strengthen personal leverage.

Mitigations: what organizations should do now

Organizations should treat AI note-taking risk as both an AI governance issue and an insider risk detection problem. The immediate priority is to reduce blind spots around how data moves into AI-enabled services.

Govern AI note-taking tools and wearables

- Review whether AI note-taking assistants, transcription tools, and wearable AI devices are permitted, restricted, or prohibited

- Establish a clear policy for personal electronic devices and wearables in meetings and other sensitive settings

- Define when personal accounts may not be used for business-related AI services

Improve visibility into AI data exfiltration paths

- Monitor access to AI note-taking, transcription, and meeting-assistant platforms

- Expand HTTP inspection and filtering where appropriate

- Review file upload activity tied to AI and collaboration services

- Watch clipboard activity near sensitive files and AI platform access

- Use contextual telemetry to understand whether users are aggregating data before upload
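
As a minimal sketch of the first two items, proxy or endpoint HTTP records can be checked against a watchlist of AI note-taking domains. The watchlist below contains only the two services named in this advisory. The function names and the choice to treat POST and PUT requests as potential uploads are assumptions for illustration, and a real deployment would maintain a broader, reviewed list.

```python
from urllib.parse import urlsplit

# Starter watchlist: only the services named in this advisory.
AI_NOTE_TAKING_DOMAINS = {"fireflies.ai", "otter.ai"}


def matched_ai_domain(url, watchlist=AI_NOTE_TAKING_DOMAINS):
    """Return the watchlist domain that the URL's host equals or is a
    subdomain of, or None. URLs without a scheme yield no host and do not match."""
    host = (urlsplit(url).hostname or "").lower()
    for domain in watchlist:
        if host == domain or host.endswith("." + domain):
            return domain
    return None


def is_ai_upload(method, url):
    """Assumption: POST or PUT to a watched domain is treated as a potential upload."""
    return method.upper() in {"POST", "PUT"} and matched_ai_domain(url) is not None
```

Matching on the registered domain plus a leading dot avoids false hits on look-alike hosts whose names merely end with the same characters.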

Strengthen sensitive data handling

- Audit and maintain named lists for critical assets and crown jewels

- Improve file classification coverage so sensitive content is easier to identify during investigations

- Review whether “sensitive” folders contain files that lack file-level classification or labeling
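
The last item can be run as a periodic coverage check. The sketch below assumes a file inventory is already available as `(folder, filename, label)` tuples, where `label` is empty or `None` when no file-level classification exists. The record shape and function name are hypothetical, and how labels are read depends on the classification tooling in use.

```python
from collections import defaultdict


def unlabeled_by_folder(records):
    """Summarize, per folder, how many files exist and which lack a label.

    records: iterable of (folder, filename, label); a missing label is None or "".
    """
    totals = defaultdict(int)
    missing = defaultdict(list)
    for folder, filename, label in records:
        totals[folder] += 1
        if not label:
            missing[folder].append(filename)
    return {
        folder: {"total": totals[folder], "unlabeled": sorted(missing[folder])}
        for folder in totals
    }
```

Folders that are treated as sensitive but show a high share of unlabeled files are candidates for remediation, since the investigated case involved exactly this gap.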

Add context from HR and enterprise data sources

- Ingest HR data that helps identify role context, contractor status, known leavers, and disabled accounts

- Use organizational context to improve triage and reduce false positives

- Pair behavioral detections with manager, peer, or case-based context when appropriate

Build repeatable response procedures

- Define an investigation path for suspected AI data exfiltration

- Include legal, HR, and security stakeholders where employee misconduct or sensitive claims may be involved

- Test and tune detections before broad deployment, especially in large environments

Raise employee awareness

- Train employees on acceptable AI use, data privacy, and the risks of summarization links, personal accounts, and AI-enabled meeting capture

- Clarify that convenience features can create real exposure when they capture, store, or share sensitive material outside approved workflows

Investigation support

This advisory includes limited-distribution reporting available only to approved insider risk practitioners. To request access to the redacted material, log in to the customer portal or contact DTEX i³.

For organizations assessing suspected related activity, DTEX i³ can provide additional intelligence, indicator support, and investigative guidance. Behavioral detections should be tested and tuned prior to enterprise-wide deployment, particularly in large environments where scale can affect signal quality and operational effectiveness.

Additional resources

- DTEX i³ 2024 Insider Risk Investigations Report

- Beware of fake AI tools masking a very real malware threat

- ESET H1 2024 Threat Report

FAQ

How can security teams detect AI data exfiltration?

Security teams can detect AI data exfiltration by monitoring sensitive file access, clipboard use, HTTP uploads, personal webmail, and visits to AI note-taking or transcription services. Personal accounts and unsecured summaries can create hidden data exfiltration paths.

Why do AI note-taking tools create insider threat risk?

AI note-taking tools create insider threat risk because they can capture meetings, summarize sensitive discussions, and store outputs in cloud services outside enterprise control. Risk increases when employees use personal accounts, unsecured links, or unapproved AI assistants.

What are the key indicators to monitor?

Key indicators include access to AI note-taking tools, personal account use, sensitive file aggregation, clipboard or upload activity, personal webmail, wearable AI assistants, externally introduced files, and renamed files designed to resemble trusted collaboration tools.

Where should organizations start?

Start by governing AI note-taking tools, personal accounts, and wearable AI devices. Then improve monitoring for sensitive file access, clipboard activity, HTTP uploads, and AI service usage to detect insider threat activity before data exfiltration escalates.
