Meet Roger. He’s not just a character; he’s a pattern, and almost every organization has a “Roger” somewhere inside its environment. It might be a developer who quietly uploads code archives to personal Git projects, a departing employee who collects “just in case” design files, or a contractor account that nobody realized still has access to sensitive data. That’s where DTEX’s Risk-Adaptive DLP comes in. It reveals the story behind those moves by surfacing behavior, context, and risk in real time, so you can intervene before data is compromised.

If you run a security program today, you probably already have a Roger. On paper, he looks normal. He uses standard tools and follows familiar workflows. He knows how to move data without tripping the obvious wires. That’s why we’re sharing our “Meet Roger” video series: to give a face to the small choices and quiet workarounds that turn everyday activity into insider risk.

In chapter one, we watch Roger walk through simple evasions. He renames files, zips them, encrypts them. Static controls miss the pattern while the SOC drowns in alerts. The lesson isn’t that Roger is clever. It’s that most insider risk is not a single dramatic event. It’s a sequence of small moves that add up to intent.

The second chapter in the “Meet Roger” series focuses on that missing story. Watch how Risk-Adaptive DLP ties protection to behavior, so controls adapt as risk changes instead of after your data’s already gone.

Why static DLP fails, and how risk-adaptive DLP closes the gaps

Static DLP fails for the same reason Roger succeeds. Traditional DLP waits for a clear, obvious violation. Most real incidents don’t look like that. They start as normal work that quietly turns into risky behavior.

Roger doesn’t need a zero-day. He needs a few ordinary steps that, in isolation, look fine: copying a folder, renaming a file, or pasting company information into a GenAI prompt. By the time a static rule finally triggers, the story is already in motion.

According to the 2025 Verizon Data Breach Investigations Report, most confirmed breaches still hinge on the human element, from misuse of legitimate access to errors and social engineering, not on exotic zero-days. This human‑driven reality breaks traditional DLP in three places:

- Sensitive data is often unstructured. Source code, screenshots, recordings, images, and videos rarely match a clean keyword pattern or static data classification.

- Exfiltration paths have multiplied. Personal webmail, cloud drives, browser uploads, and GenAI prompts all carry risk.

- The most important signal isn’t the final transfer. It’s the buildup. Large data collection, unusual access, odd working hours, or new tools are all behavioral signals.

Static DLP is built around a final data transfer. As a result, teams respond late, endlessly tune rules, and still miss intent. Risk-adaptive DLP addresses that head-on, moving to a proactive approach.

Risk-adaptive DLP: dynamic protection tied to dynamic risk

DTEX approaches data loss as a human, workflow, and data problem at the same time.

At the center is the DTEX Risk-Adaptive Framework™, powered by dynamic risk groups. Users move through risk bands, like Low, Elevated, Watch, and Quarantine, as behavioral signals stack up. Policies follow these groups and adapt automatically when risk changes.
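To make the idea of users moving through risk bands concrete, here is a minimal sketch. It is not DTEX’s scoring model. The band names come from the description above, but the signal names, weights, and thresholds are illustrative assumptions chosen only to show how stacked signals could shift a user from one band to the next.

```python
from dataclasses import dataclass, field

# Band names are from the article; the thresholds are hypothetical.
BANDS = [(0, "Low"), (30, "Elevated"), (60, "Watch"), (85, "Quarantine")]

# Hypothetical behavioral signals and weights, for illustration only.
SIGNAL_WEIGHTS = {
    "odd_working_hours": 10,
    "new_tool": 15,
    "unusual_access": 20,
    "genai_paste": 25,
    "large_data_collection": 30,
}


def band_for(score: int) -> str:
    """Return the highest band whose threshold the score meets."""
    current = BANDS[0][1]
    for threshold, name in BANDS:
        if score >= threshold:
            current = name
    return current


@dataclass
class UserRisk:
    user: str
    signals: list = field(default_factory=list)

    @property
    def score(self) -> int:
        # Signals stack up; the total is capped at 100. Unknown signals add 0.
        return min(100, sum(SIGNAL_WEIGHTS.get(s, 0) for s in self.signals))

    @property
    def band(self) -> str:
        return band_for(self.score)

    def observe(self, signal: str) -> str:
        """Record a new signal and return the user's band afterwards."""
        self.signals.append(signal)
        return self.band


roger = UserRisk("roger")
history = [
    roger.observe(s)
    for s in [
        "odd_working_hours",
        "new_tool",
        "large_data_collection",
        "genai_paste",
        "unusual_access",
    ]
]
print(history)
```

No single signal in this sketch is alarming on its own. The band only changes because the signals accumulate, which is the point of tying policy to the group rather than to one event.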

Dynamic risk groups dial

This isn’t a cosmetic shift. It changes how teams design control.

Instead of asking “Should we block this action for everyone?” you can ask “What is the right intervention for this user, in this moment, given their trajectory?” That’s how you reduce noise while still catching high-intent behavior.

Two ways to identify sensitive data, including unstructured IP

Most DLP programs start and end with content inspection. That can help for regulated data like payment card information or health records, but it struggles to keep up with intellectual property (IP) and non-text formats.

DTEX uses two complementary methods:

- Content-based classification: detects regulated data on the device. It supports categories like PII, PHI, and PCI while keeping inspection local.

- Behavior-based classification: uses AI-driven models and behavioral analytics to infer sensitivity based on file attributes and behavior. It applies to unstructured formats such as images, videos, and source code, as well as rich media like recordings and design files.

Because these methods work together, teams can protect IP crown jewels earlier. They can also focus less on brittle, regex-heavy policies that drive false positives.
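As a rough illustration of how the two methods can complement each other, the sketch below pairs a content check with a behavior check. Everything here is an assumption for the sake of the example: the content side uses a standard Luhn checksum on card-like numbers, and the behavior side is a simple hand-written rule standing in for the AI-driven models described above. The `FileEvent` fields, extension list, and burst threshold are hypothetical.

```python
import re
from dataclasses import dataclass
from pathlib import PurePosixPath

# Content-based: find 13 to 19 digit runs (optionally separated by spaces
# or hyphens) and keep only those that pass the Luhn checksum.
CARD_CANDIDATE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(candidate: str) -> bool:
    digits = [int(c) for c in candidate if c.isdigit()]
    checksum = 0
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        checksum += d
    return len(digits) >= 13 and checksum % 10 == 0


def contains_card_number(text: str) -> bool:
    return any(luhn_valid(m.group()) for m in CARD_CANDIDATE.finditer(text))


# Behavior-based: a hypothetical rule, not a trained model. It looks only
# at file attributes and how the file was handled, never at its content.
IP_EXTENSIONS = {".py", ".c", ".cpp", ".java", ".psd", ".dwg", ".png", ".mp4"}


@dataclass
class FileEvent:
    path: str
    copied_from_repo: bool
    files_in_same_burst: int


def behavior_sensitive(event: FileEvent) -> bool:
    ext = PurePosixPath(event.path).suffix.lower()
    bulk = event.files_in_same_burst >= 50  # hypothetical threshold
    return ext in IP_EXTENSIONS and (event.copied_from_repo or bulk)


def classify(event: FileEvent, text: str = "") -> set:
    """Combine both methods; either one can label the file."""
    labels = set()
    if text and contains_card_number(text):
        labels.add("PCI")
    if behavior_sensitive(event):
        labels.add("IP")
    return labels
```

In this sketch a C++ file copied out of a repository is labeled as IP even though no pattern in its content would match, while a plain text note is labeled PCI only when it contains a checksum-valid card number.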

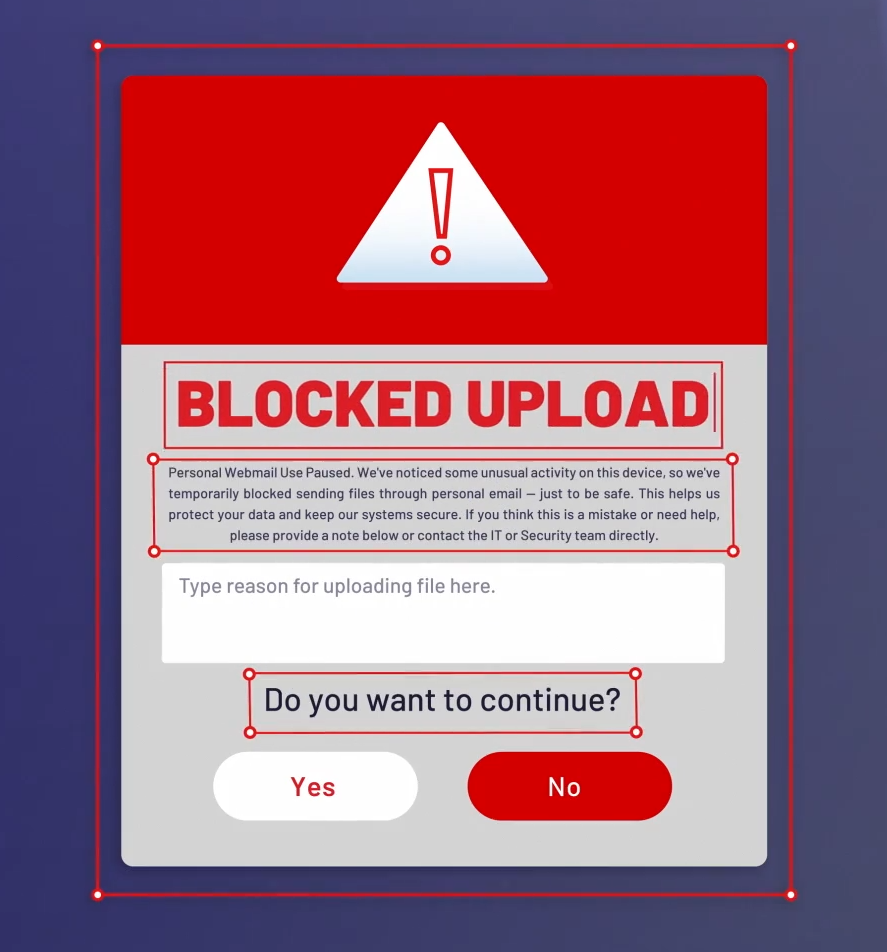

Risk-adaptive enforcement: coach, warn, block, and capture intent

Detection alone doesn’t stop data loss. The best programs change outcomes.

Risk-Adaptive DLP adjusts enforcement based on intent and context. As risk increases, controls tighten automatically inside the same risk-adaptive framework.

A practical ladder looks like this:

- Coaching: a short, contextual nudge when a user is about to take a risky step.

- Just-in-time warning: clear friction when behavior deviates from baseline.

- Block: a hard stop when indicators of intent cross the threshold your organization sets.

Users can provide justification, and security messages are customizable. A developer pushing code to an approved repository shouldn’t feel the same friction as a user pasting product roadmaps into personal webmail.
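The ladder above can be sketched as a small decision table. This is an assumption-laden illustration, not DTEX policy syntax: the band names follow the article, while the `Action` enum, the table contents, and the `approved_destination` flag are hypothetical choices made to show how the same action can earn different friction for different users.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    COACH = "coach"
    WARN = "warn"
    BLOCK = "block"


# Band names are from the article; the ordering index is an assumption.
BAND_RANK = {"Low": 0, "Elevated": 1, "Watch": 2, "Quarantine": 3}

# Hypothetical ladder: one row per destination type, one column per band.
LADDER = {
    True: [Action.ALLOW, Action.ALLOW, Action.COACH, Action.WARN],   # approved destination
    False: [Action.COACH, Action.WARN, Action.BLOCK, Action.BLOCK],  # unapproved destination
}


def choose_action(band: str, sensitive: bool, approved_destination: bool) -> Action:
    """Pick an intervention for this user, in this moment."""
    if not sensitive:
        return Action.ALLOW
    return LADDER[approved_destination][BAND_RANK[band]]


def asks_for_justification(action: Action) -> bool:
    # Assumption: only a warning prompts the user to explain and proceed.
    return action is Action.WARN


# A developer pushing code to an approved repository sees no friction...
print(choose_action("Low", sensitive=True, approved_destination=True))
# ...while a higher-risk user sending data to personal webmail is stopped.
print(choose_action("Watch", sensitive=True, approved_destination=False))
```

Keeping the ladder in a table rather than in nested conditions makes the thresholds easy for an organization to review and adjust.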

Risk-adaptive message justification

In other words, risk-adaptive enforcement supports precision by replacing one-size-fits-all DLP with interventions that match each moment.

GenAI governance is now part of DLP

GenAI is now a major data movement channel, and organizations are still working through how to govern sensitive data in GenAI workflows. Prompts can contain sensitive content, and outputs can reintroduce that same content into downstream workflows. This activity spans sanctioned AI tools and shadow AI, across browsers, personal accounts, and non-browser utilities.

DTEX treats GenAI as a monitoring and control problem within the DLP program. DTEX Risk-Adaptive DLP:

- Tracks GenAI usage across managed and unmanaged environments

- Blocks the upload of sensitive data into prompts based on both content and behavior

- Monitors AI-generated content and ties it back to users and risk context

Those signals feed the same behavioral story that powers DTEX risk groups. Users whose risk escalates because of data hoarding or abnormal access can be governed more tightly when they attempt to move data into an AI tool.

Unsupervised agents, especially those configured by enthusiastic builders outside security’s purview, can chain actions and touch regulated data. Without role-based policies, scoped credentials, and kill switches, you’re a prompt away from autonomous misbehavior.

For analysts: separate context from events, then move faster

DLP programs often fail in the SOC because analysts struggle to separate “what happened” from “why it matters.” They have events and logs, but they rarely have a clear, combined view of user risk and data activity.

Chapter two of “Meet Roger” highlights a redesigned Control Center that separates insider risk profiles from data loss events. That separation matters. Analysts can see a user’s risk trajectory, behavioral patterns, and current risk group next to a concrete event in the same interface.

In a legacy DLP console, an analyst might see only “file.zip uploaded to cloud storage” with limited context. With DTEX, that same event appears next to two weeks of abnormal data collection, new GenAI usage, and a shift from “Low” to “Watch” in the user’s risk group. That is the narrative investigators need to act faster and make better decisions.
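A minimal sketch of that pairing, under stated assumptions: the `Signal` and `DlpEvent` types, their fields, and the 14-day window are hypothetical, and this is not how the DTEX Control Center is implemented. It only shows the idea of placing a single event next to the same user’s recent behavioral history.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Signal:
    user: str
    when: datetime
    kind: str


@dataclass
class DlpEvent:
    user: str
    when: datetime
    description: str


def context_for(event: DlpEvent, signals: list, window: timedelta = timedelta(days=14)) -> list:
    """Return the user's signals from the window leading up to the event, oldest first."""
    start = event.when - window
    relevant = [
        s for s in signals
        if s.user == event.user and start <= s.when <= event.when
    ]
    return sorted(relevant, key=lambda s: s.when)


t0 = datetime(2025, 6, 30, 12, 0)
signals = [
    Signal("roger", t0 - timedelta(days=20), "bulk_copy"),  # outside the window
    Signal("roger", t0 - timedelta(days=10), "bulk_copy"),
    Signal("alice", t0 - timedelta(days=1), "genai_use"),   # different user
    Signal("roger", t0 - timedelta(days=2), "genai_use"),
]
event = DlpEvent("roger", t0, "file.zip uploaded to cloud storage")
context = context_for(event, signals)
print(event.description, [s.kind for s in context])
```

The event on its own says what happened. The returned context is what lets an analyst judge why it matters.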

Redesigned DTEX Control Center and Ai3 Risk Assistant

On top of the Control Center, DTEX pairs DLP workflows with AI-guided investigation support through the DTEX Ai3 Risk Assistant (Ai3). Ai3 uses behavioral metadata and risk modeling from the DTEX Platform to provide playbooks, summaries, and recommended next steps. This helps analysts move faster and make consistent decisions without overlooking sensitive data.

What to pressure test when evaluating modern DLP

If you are modernizing DLP, focus on operational details. Detailed questions surface real capability more quickly than a feature checklist. Evaluate the following:

- Can controls adapt automatically as risk changes, or does your team still live in a rule-tuning loop?

- Can you identify sensitive unstructured data, including images, video, and source code, without over-relying on deep content inspection?

- Can you govern GenAI workflows, including prompt uploads, across browsers, personal accounts, and non-browser utilities?

- Can analysts separate user risk context from DLP events, and can they accelerate investigations with guided workflows?

- Can you reduce false positives without creating blind spots?

Finally, ask how the system handles privacy. Data protection can’t come at the cost of workforce trust.

What this means for your DLP program

Every organization has a Roger. The question is whether your DLP program can see his whole story, understand behavior and intent, and respond in time.

Risk-adaptive DLP takes a fundamentally different approach to data loss prevention. It aligns controls with user behavior, risk trajectory, and GenAI usage rather than waiting for a final, obvious violation.

See how DTEX Risk Groups, GenAI governance, and the Ai3 Risk Assistant can support your environment.
